From a talk on building domain-specific AI for genomics and what it means for researchers, clinicians, and patients

I gave my first public talk on Agentic Genomics last week. It was an hour and a half of ideas I’ve been building toward for two years, ever since I started an MSc in AI & Digital Health, joined the Turing Institute as a Fellow, and began asking a question that most of my bioinformatics colleagues weren’t asking: What happens when AI agents do genomics, not just chat about it?

Here’s the core argument, and everything that follows from it.

Watch the full talk on YouTube

The chatbot era is already over

ChatGPT is impressive. Claude is impressive. But ask any of them to classify a pharmacogenomic variant and you’ll get a confident, fluent, wrong answer. They hallucinate star allele assignments. They invent drug interactions. They cite papers that don’t exist.

This is not a bug that will be fixed with a bigger model. It’s a category error. General-purpose LLMs are not designed to handle the precision that genomics demands. When you’re assigning a patient’s CYP2D6 metaboliser status, and that call determines whether codeine gives little pain relief (poor metabolisers) or risks respiratory depression (ultrarapid metabolisers), “mostly right” is not good enough.

The answer isn’t better chatbots. It’s agents: AI systems that don’t just talk, but act. Systems that route queries to verified, expert-curated tools. Systems that keep sensitive genomic data local instead of sending it to a cloud API. Systems that know the difference between what they can answer and what requires a specialist skill.

That’s what I mean by Agentic Genomics.

ClawBio: skills, not prompts

Two weeks before the talk, we launched ClawBio, the first bioinformatics-native AI agent skill library. The idea is simple: take published, peer-reviewed genomics methods and package them as executable skills that any AI agent can discover and run.

Not prompts. Not fine-tuned models. Skills, each one a self-contained unit with pinned dependencies, reproducibility bundles, and safety guardrails. The AI routes you to the right skill. The skill does the science.
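To make that split concrete, here is a minimal sketch in Python. It is not ClawBio’s actual API: `Skill`, `SkillRegistry`, `route`, and the `gc_content` example skill are hypothetical names invented for illustration, and the keyword matcher is only a stand-in for the place where an LLM would sit. The point is that the router returns nothing more than a skill name, and the answer itself comes from ordinary deterministic code.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Skill:
    """Hypothetical skill record: a name, routing keywords, and the code to run."""
    name: str
    keywords: Tuple[str, ...]
    run: Callable[[dict], dict]


class SkillRegistry:
    """Execution layer: only registered, validated skills can ever run."""

    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def skills(self) -> List[Skill]:
        return list(self._skills.values())

    def execute(self, name: str, payload: dict) -> dict:
        if name not in self._skills:
            # Refuse rather than improvise an answer.
            raise KeyError(f"No registered skill named {name!r}")
        return self._skills[name].run(payload)


def route(query: str, registry: SkillRegistry) -> Optional[str]:
    """Routing layer (stand-in for an LLM): pick a skill name, or None."""
    text = query.lower()
    for skill in registry.skills():
        if any(keyword in text for keyword in skill.keywords):
            return skill.name
    return None


def gc_content(payload: dict) -> dict:
    """Toy skill: fraction of G and C bases in a DNA sequence."""
    sequence = payload["sequence"].upper()
    if not sequence:
        raise ValueError("sequence must not be empty")
    gc_count = sum(base in "GC" for base in sequence)
    return {"gc_fraction": gc_count / len(sequence)}


registry = SkillRegistry()
registry.register(Skill("gc_content", ("gc content",), gc_content))

chosen = route("What is the GC content of this read?", registry)
if chosen is not None:
    print(registry.execute(chosen, {"sequence": "ATGC"}))
```

If the router finds no matching skill it returns `None`, and nothing runs. In this sketch, a wrong routing decision can select the wrong tool, but it cannot invent a result.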

In two weeks: 2,000+ downloads, significant GitHub traction, community contributors from multiple countries, and the first external pull request merged within 48 hours.

This is not a research prototype. It’s infrastructure.

Why domain-specific agents win

During the talk, I made a point I want to repeat here because I think it’s the most important strategic insight in this space:

Genomics data is too sensitive for general-purpose cloud AI.

Your genome is the ultimate personal data. It’s immutable. It identifies your relatives. It reveals disease predispositions you might not want your insurer to know about. Sending it to OpenAI’s servers for processing is not just a privacy risk; in many jurisdictions, it’s illegal.

Domain-specific agents solve this. They run locally. They process data on your machine. They use curated reference databases, not pattern-matched training data. And because they’re specialised, they can be validated against known truth sets in ways that general LLMs cannot.
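As a rough illustration of what validating against a truth set can look like, here is a small Python sketch. The function name `concordance`, the sample IDs, and the labels `A`, `B`, and `C` are placeholders invented for this example, not real data or a real benchmark. It assumes the simplest possible scoring: a call counts as correct only if it matches the truth label exactly, and a missing call counts as an error.

```python
from typing import Dict, List, Tuple


def concordance(
    predicted: Dict[str, str], truth: Dict[str, str]
) -> Tuple[float, List[str]]:
    """Return the fraction of truth-set samples called correctly,
    plus the sorted IDs of samples that were wrong or missing."""
    if not truth:
        raise ValueError("truth set must not be empty")
    discordant = sorted(
        sample_id
        for sample_id, expected in truth.items()
        if predicted.get(sample_id) != expected  # a missing call is an error
    )
    correct = len(truth) - len(discordant)
    return correct / len(truth), discordant


# Placeholder truth set and tool output (hypothetical values).
truth = {"sample_1": "A", "sample_2": "B", "sample_3": "A", "sample_4": "C"}
predicted = {"sample_1": "A", "sample_2": "B", "sample_3": "C"}

score, failures = concordance(predicted, truth)
print(score, failures)
```

A deterministic tool gives the same score every time it is run on the same inputs, which is what makes a check like this meaningful as a release gate.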

The future of genomic AI is not one giant model that knows everything. It’s a constellation of specialist agents, each one an expert in a narrow domain, each one auditable, each one keeping your data where it belongs.

Five things I told the audience (and mean)

1. Deep intelligence is the bottleneck, not compute.

We have more processing power than we know what to do with. What we lack is the human judgment that decides which experiment to run, which variant matters, which signal is real. Call it gut instinct, call it clinical intuition, call it twenty years of staring at sequence alignments. That deep intelligence is what AI should be amplifying, not replacing.

2. AI will not atrophy your brain.

I hear this fear constantly: “If AI does the thinking, we’ll forget how to think.” I don’t buy it. The calculator didn’t make us worse at mathematics; it freed us to do harder mathematics. AI agents free you from the mechanical work of bioinformatics so you can focus on the cognitive work that only you can do. The questions that require not just analysis, but insight.

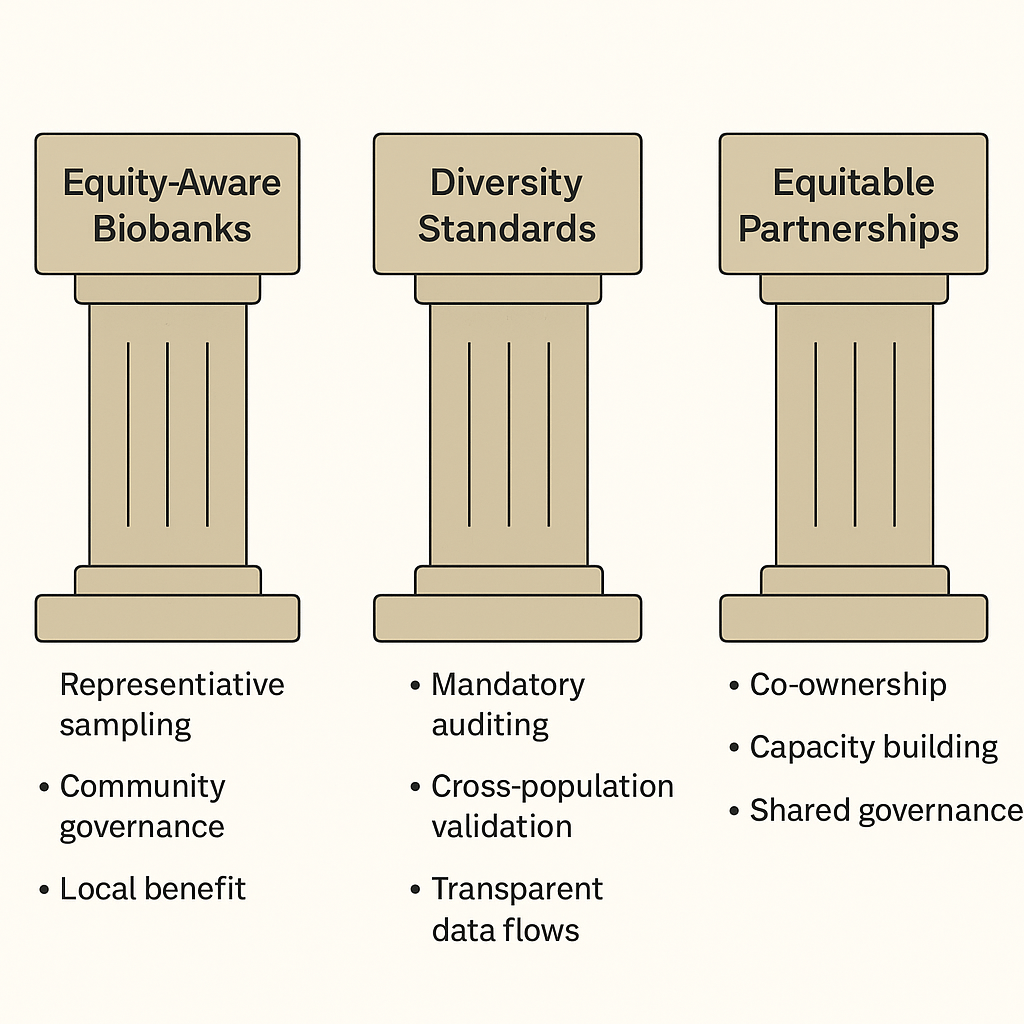

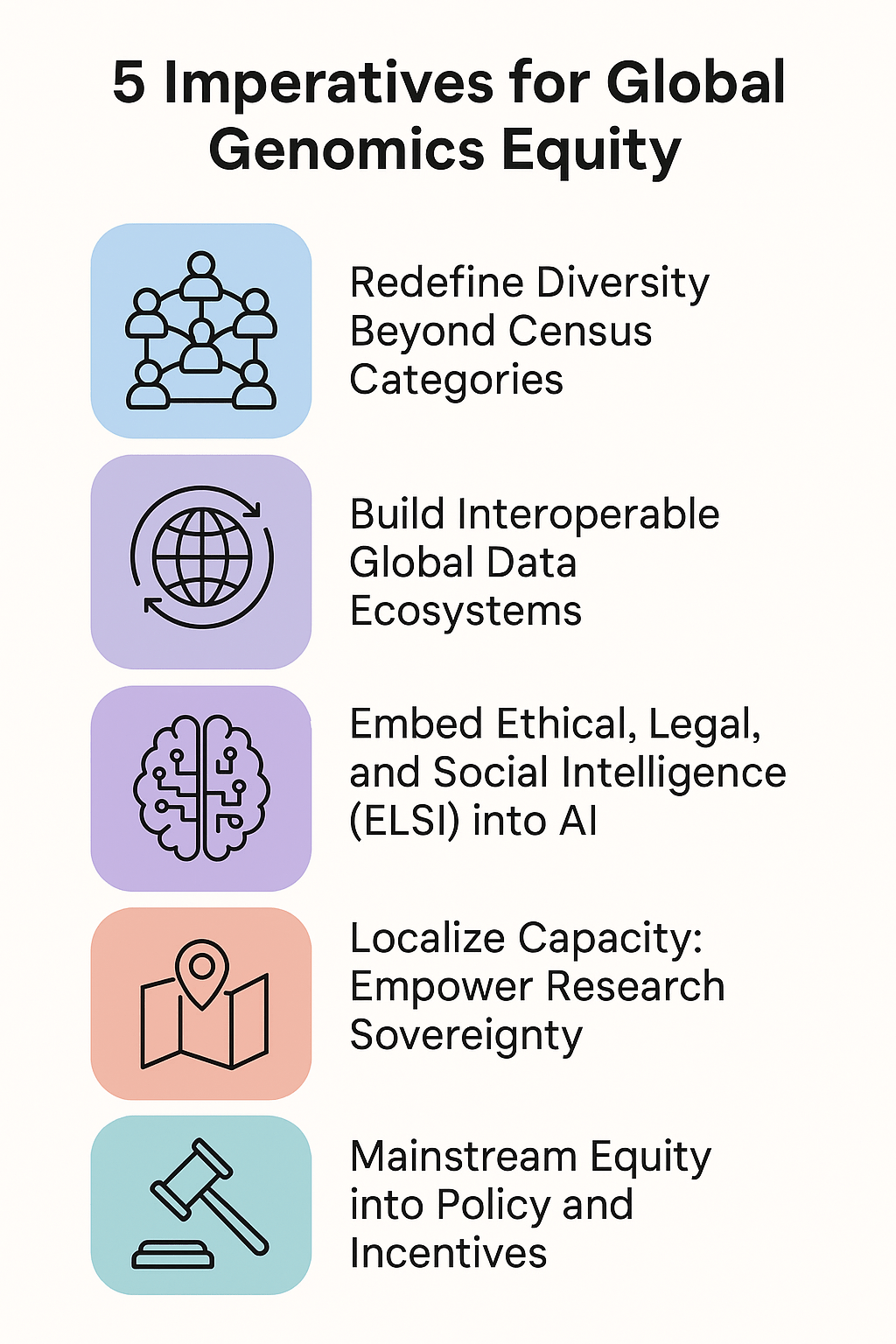

3. Equity is not optional, it’s existential.

If AI genomics tools are built by and for populations that are already overrepresented in reference databases, the disparity doesn’t just persist, it accelerates. Exponentially. Every model trained predominantly on European genomes makes the next model slightly worse for everyone else. We must build equity in from day one, not bolt it on later as an afterthought.

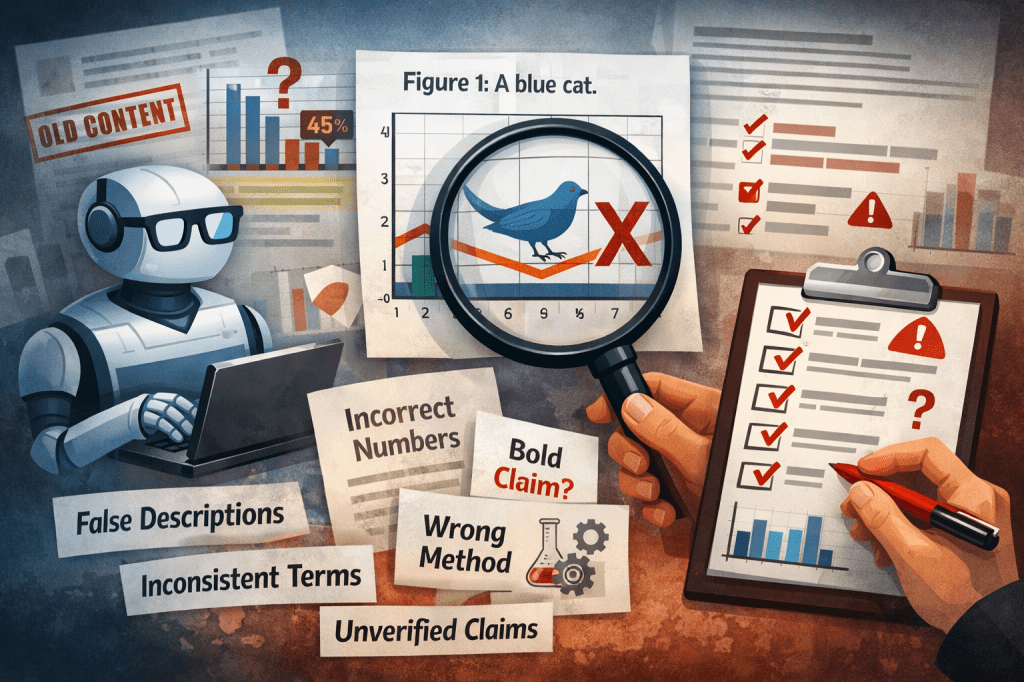

4. Always verify. Always.

LLMs fabricate figures. They invent references. They hallucinate author lists and journal names. I’ve seen models generate pharmacogenomic classifications that would cause prescribing errors. The rule is absolute: never trust an LLM output in genomics without independent verification. This is why ClawBio separates the routing layer (where the LLM operates) from the execution layer (where validated tools operate). Hallucination in the critical path is not acceptable.

5. Become the expert the LLM can’t be.

General LLMs are trained on the internet. They know a little about everything. Your competitive advantage, whether you’re a researcher, a clinician, or a bioinformatician, is knowing more about your specific niche than any general model ever will. Go deep. Become the person whose judgment the AI augments, not replaces. That niche expertise is what makes your personal AI agent genuinely personal.

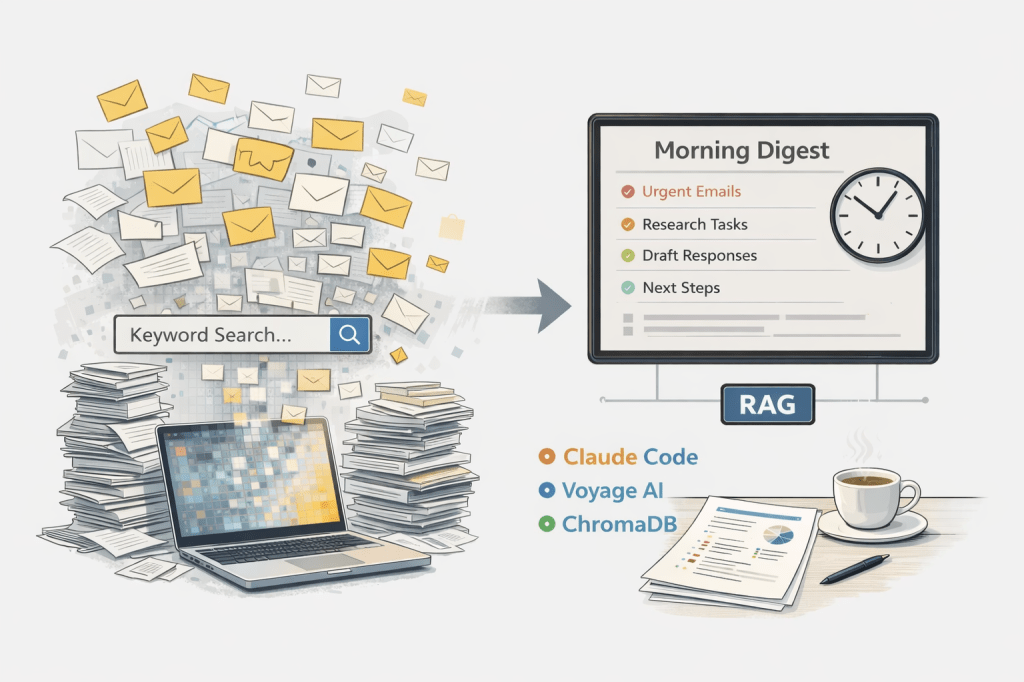

Everyone will have an agent

Here’s the prediction I’m most confident about: within five years, every working scientist will have a personal AI agent trained on their own context, their own papers, their own decision-making patterns. Not a chatbot you prompt from scratch each time. An agent that knows your research programme, your analytical preferences, your threshold for what constitutes a meaningful result.

I already have two: RoboTerri (Telegram) and RoboIsaac (WhatsApp). They share the same knowledge base. They can run the same ClawBio skills. They’re imperfect, early, and already indispensable.

The question for genomics is not whether this will happen. It’s whether the agents we build will be safe, equitable, and scientifically rigorous, or whether we’ll let Silicon Valley ship genome chatbots that hallucinate variant classifications and call it innovation.

The real purpose of AI

I ended the talk with something that isn’t fashionable in tech circles. The purpose of AI is not super-intelligence. It’s not super-abundance. It’s not replacing humans or maximising productivity metrics.

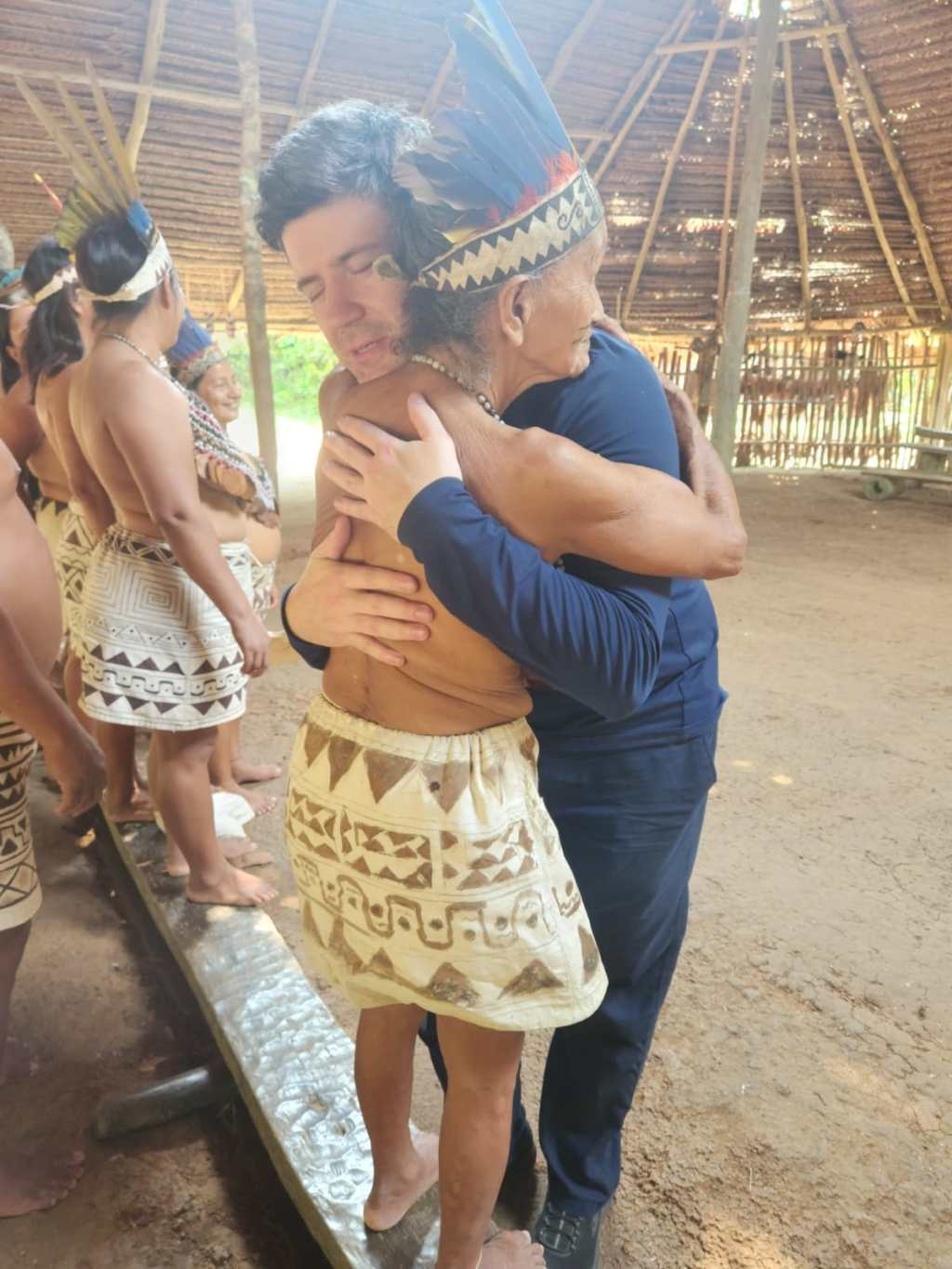

The purpose is enlightenment. Wisdom. Better decisions made by better-informed humans. AI that helps a clinician understand a patient’s pharmacogenomic profile before prescribing. AI that helps a researcher in Lima access the same analytical tools as a researcher in London. AI that helps you think more clearly about the problems that matter most.

That’s what Agentic Genomics is for. Not artificial intelligence. Augmented intelligence.

ClawBio is open source.

- Website: clawbio.ai
- GitHub: github.com/ClawBio/ClawBio
- Slides: clawbio.github.io/ClawBio/slides

Manuel Corpas is a Senior Lecturer at the University of Westminster, former Turing Fellow, and creator of ClawBio. He writes weekly about AI and global health equity.
